Portfolio Management with R

Enrico Schumann [PDF] [PDF cropped] [Code]

1. Introduction

1.1. About PMwR

This book describes how to use the PMwR package. PMwR provides a small set of reliable, efficient and convenient tools that help in processing and analysing trade and portfolio data.

PMwR grew out of various pieces of software that I have

written since 2008, first at the University of Geneva, later

during my work at financial firms. The package has been and

still is under active development, but in its current form is

has become fairly stable, notably functions such as btest,

returns, journal and position. (I have written so much

code that relies on these functions that I cannot afford to

change them.) Nevertheless, several parts still need some

grooming, so I reserve the right to change them. Any such

changes will be announced in the NEWS file (including

instructions how to adapt exisiting code), and will be

reflected in this manual. I am grateful for comments and

suggestions. The book itself is written in Org mode.

The complete tangled code is available from the book's website.

The PMwR package is on CRAN; to install the package, simply type

install.packages("PMwR")

in an R session. The development version of the package is available from http://enricoschumann.net/R/packages/PMwR/. To get the development version from within R, use the following command:

install.packages("PMwR", repos = c('http://enricoschumann.net/R', getOption('repos')))

The package depends on several other packages, which are automatically obtained from the same repository and from CRAN. The package's source code is also pushed to public repositories at

https://git.sr.ht/~enricoschumann/PMwR

and

https://gitlab.com/enricoschumann/PMwR

and

https://github.com/enricoschumann/PMwR.

Recent versions of the package (since 0.3-4, released

in 2016) are pure R code and can be built without any

prerequisites except an R installation; older versions

contained C code, so you needed to have the necessary tool

chain installed (typically by installing Rtools).

Closer to finance, there is the Ledger project.

1.2. Principles

- small

- The aim of PMwR is to provide a small set of tools.1 This comes at the price: interfaces might be more complicated, with arguments being overloaded. But with only few functions, it is easier to remember a function name or to find it in the first place.

- flexible and general

- PMwR aims to be open to different types of instruments, different timestamps, etc. (In this respect, the zoo package is a rolemodel for how a package should work: blend well with standard data structures, be idiomatic, flexible with regards to date/time classes, …)

- functional

To quote from chapter 1 of K&R: `With properly designed functions, it is possible to ignore how a job is done; knowing what is done is sufficient'. PMwR emphasizes computations: transforming simple and transparent data structures into other data structures. In R, a computation means calling a function. There are many good reasons for using functions.

- clearer code; code is easier to reuse and to maintain

- functions provide a clear view of what is needed for a specific computation (i.e. the function arguments), and so functions help with parallel/distributed computing

- it is easier to test functionality

- because of R's pass-by-value principle, input data is not changed, which makes code more reliable

- clean workspace after function call has ended

There are even more advantages, actually; such as the application of techniques such as memoisation.

Computations provided by PMwR do not – for developers: should not – rely on global options/settings. The exception are functions that are used interactively, which essentially means

printmethods. (In scripts or methods,catis preferred.)- matching by name

- Whenever possible and intuitive, data should be matched by name, not by position. This is most natural with vectors that store scalar information about instruments, such as prices or multipliers. In such cases, data input is preferred in the form of named vectors. (In other languages, we would use a hashtable instead of a vector.)

- vectorization

Functions should do vectorization when it is beneficial in terms of speed or clarity of code. Likewise, functions should work on matrices directly (typically columnwise) when it simplifies or speeds up things. Otherwise, applying the function (i.e. looping) should be left to the user.

An example may clarify this: drawdown (actually in the NMOF package) is internally computed through

cumsum, so it will be fast for a single vector. But for a matrix of time series, it would need a loop, which will be left to the user. On the other hand, returns can be computed efficiently for a matrix, so functionreturnsdirectly handles matrices of prices.

1.3. Other packages

Several other packages originated from PMwR; initially, much of their code had been part of PMwR. Most of those packages are on CRAN as well.

datetimeutils- \iRpackageNoShow{datetimeutils} From the

DESCRIPTIONfile: Utilities for handling dates and times, such as selecting particular days of the week or month, formatting timestamps as required by RSS feeds, or converting timestamp representations of other software (such as 'MATLAB' and 'Excel') to R. The package is lightweight (no dependencies, pure R implementations) and relies only on R's standard classes to represent dates and times ('Date' and 'POSIXt'); it aims to provide efficient implementations, through vectorisation and the use of R's native numeric representations of timestamps where possible.

https://github.com/enricoschumann/datetimeutils

http://enricoschumann.net/R/packages/datetimeutils/ mailtools- \iRpackageNoShow{mailtools} Utilities

for handling email.

https://github.com/enricoschumann/mailtools

http://enricoschumann.net/R/packages/mailtools/ textutils- \iRpackageNoShow{textutils} Utilities for

handling character vectors that store human-readable text

(either plain or with markup, such as HTML or LaTeX). The

package provides, in particular, functions that help with

the preparation of plain-text reports, e.g. for expanding

and aligning strings that form the lines of such

reports. The package also provides generic functions for

transforming R objects to HTML and to plain text.

https://github.com/enricoschumann/textutils

http://enricoschumann.net/R/packages/textutils/ tsdb- \iRpackageNoShow{tsdb} A terribly-simple data base

for numeric time series, written purely in R, so no external

database-software is needed. Series are stored in plain-text

files (the most-portable and enduring file type) in CSV

format. Timestamps are encoded using R's native numeric

representation for 'Date'/'POSIXct', which makes them fast

to parse, but keeps them accessible with other software. The

package provides tools for saving and updating series in

this standardised format, for retrieving and joining data,

for summarising files and directories, and for coercing

series from and to other data types (such as 'zoo'

series).

https://github.com/enricoschumann/textutils/

http://enricoschumann.net/R/packages/textutils

1.4. Setting up R

In this manual, all R output will be presented in English. In case you run R in a different locale, but want to receive messages in English, type this:

Sys.setenv(LANGUAGE = "en")

Or, since R version 4.2:

Sys.setLanguage(lang = "en")

1.5. Typographical conventions

R code is shown in a typewriter font like this.

1+1

The results of a computation are shown as follows:

[1] 2

2. Keeping track of transactions: journals

2.1. Overview

The ultimate basis of many financial computations are lists

of transactions. PMwR provides an S3 class journal

for handling such lists. A journal is a list of atomic

vectors, to which a class attribute is attached. (Thus, a

journal is similar to a data-frame.2) Such a list is

created through the function journal. Methods should not

rely on this list being sorted in any particular way:

components of a journal should always be retrieved by name,

never by position. (In this respect a journal differs from a

data-frame, for which we can meaningfully refer to the

n-th column.)

A journal's components, such as amount or timestamp, are

called fields in this manual.

The simplicity of the class is intended, because it is meant

for interactive analyses. The user may – and is expected to

– dissect the information in a journal at will; such

dissections may include removing the class attribute.

2.2. Fields

What is actually stored in a journal is up to the user. A

number of fields are, however, required for certain

operations and so it is recommended that they be present:

amount- The notional amount that is transacted.

amountis, in a way, the most important property of a journal. When functions compute statistics from the journal (the number of transactions, say), they will often look atamount. timestamp- When did the transaction take place? A numeric or character vector; should be sortable.

price- Well, price. Well, there are many types of prices. The price specified in a journal can be used to compute profit/loss, so the difference between prices should be proportional to profit/loss for the transactions. Unfortunately, there are many instruments that are not quoted in transaction prices: Options may be quoted in implied volatility, say, or bonds in yield. For such instruments, the net-asset value (NAV) should be used.

instrument- Description of the financial instrument; typically an identifier, a.k.a. ticker or symbol. That is, a string or perhaps a number;3 but not a more-complex object (recall that journals are lists of atomic vectors).

id- A transaction identifier, possibly but not necessarily unique.

account- Description of the account (a string or perhaps a number).

...- other fields. They must be named, as in

fees = c(1, 2, 1).

All fields except amount can be missing. Such missing values

will be `added back' as NA with the exception of id and

account, which will be NULL. To be clear: amount could

be a vector of only NA values, but it cannot be left out

when the journal is created. (This will become clearer with

the examples below.)

A journal may have no transactions at all in it. In such a case all fields have length zero,

e.g. amount would be numeric(0). Such empty journals can

be created by saying journal() or by coercing a zero-row

data-frame to a journal, via a call to as.journal.

Transactions in a journal may be organised in hierarchies, such as

account => subaccount => subsubaccont => ... => instrument

This is useful and necessary when you have traded an

instrument for different accounts, say, or as part of

different strategies. Such a hierarchy may be

completely captured in the instrument field, by

concatenating account hierarchy and instrument using a

separator pattern such as ::.4 The result would

be `namespaced' instruments such as

Pension::Equities::AMZN. Alternatively, part of the

hierarchy may be stored in the account field.

2.3. Creating and combining journals

The function journal creates journal objects. See ?journal

for details about the function and about methods for journal objects.

At its very minimum, a journal must contain amounts of something.

J <- journal(amount = c(1, 2, -2, 3))

J

amount 1 1 2 2 3 -2 4 3 4 transactions

Actually, that is not true. Sometimes it is useful to

create an empty journal, one with no entries at

all. You can do so by saying journal(), without any

arguments.

journal()

no transactions

To see the current balance, which is nothing more than

the sum over all amounts, you can use position.

position(J)

4

Suppose you wanted to note how many bottles of milk and wine you have stored in your cellar. Whenever you add to your stock, you have a positive amount; whenever you retrieve bottles, you have a negative amount. Then, by keeping track of transactions, you do not have to take stock (apart, perhaps, from occasional checking that you did not miss a transaction), as long as you keep track of what you put into your cellar and what you take out.

There may be some analyses you can do on flows alone: you may check your drinking habits for patterns, such as slow accumulation of wine, followed by rapid consumption; or the other way around. But typically, you will want to analyse your transactions later, and then the more information you record about them – when, what, why, at what price, etc. –, the better. Journals allow you to store such information. To show how they are used, let us switch to a financial example.

Suppose you have transacted the following trades.

| timestamp | account | instrument | amount | price | note | |------------+---------+------------+--------+---------+-------------| | 2017-08-01 | Pension | AMZN | 10 | 1001.00 | | | 2017-08-01 | Pension | MSFT | 220 | 73.10 | | | 2017-07-14 | Trading | AMZN | 10 | 1001.50 | | | 2017-07-31 | Trading | AMZN | -5 | 1014.00 | take profit | | 2017-08-15 | Trading | AMZN | 10 | 985.50 | | | 2017-10-05 | Pension | MSFT | 70 | 74.40 | |

This table is formatted in Org syntax, and throughout this chapter

we will present trade information in this format. The function

\iRfunction{org\_journal} converts such a table into a

journal.

org_journal <- function(file, text, timestamp.as.Date = TRUE) { ans <- orgutils::readOrg(text = text) if (timestamp.as.Date && "timestamp" %in% colnames(ans)) ans$timestamp <- as.Date(ans$timestamp) ans <- as.journal(ans) ans }

We read the table into a journal.

J <- org_journal(text = " | timestamp | account | instrument | amount | price | note | |------------+---------+------------+--------+---------+-------------| | 2017-08-01 | Pension | AMZN | 10 | 1001.00 | | | 2017-08-01 | Pension | MSFT | 220 | 73.10 | | | 2017-07-14 | Trading | AMZN | 10 | 1001.50 | | | 2017-07-31 | Trading | AMZN | -5 | 1014.00 | take profit | | 2017-08-15 | Trading | AMZN | 10 | 985.50 | | | 2017-10-05 | Pension | MSFT | 70 | 74.40 | | ") J

instrument timestamp amount price account note 1 AMZN 2017-08-01 10 1001.0 Pension 2 MSFT 2017-08-01 220 73.1 Pension 3 AMZN 2017-07-14 10 1001.5 Trading 4 AMZN 2017-07-31 -5 1014.0 Trading take profit 5 AMZN 2017-08-15 10 985.5 Trading 6 MSFT 2017-10-05 70 74.4 Pension 6 transactions

A print method defines how a journal is displayed. See

?print.journal for details. In general, you can always get help

for methods for generic functions by saying

?<generic_function>.journal, e.g. ?print.journal or

?as.data.frame.journal.

print(J, max.print = 2, exclude = c("account", "note"))

instrument timestamp amount price 1 AMZN 2017-08-01 10 1001.0 2 MSFT 2017-08-01 220 73.1 [ .... ] 6 transactions

A str method shows the fields in the journal.

str(J)

'journal': 6 transactions $ instrument: chr [1:6] "AMZN" "MSFT" "AMZN" "AMZN" ... $ account : chr [1:6] "Pension" "Pension" "Trading" ... $ timestamp : Date[1:6], format: "2017-08-01" ... $ amount : int [1:6] 10 220 10 -5 10 70 $ price : num [1:6] 1001 73.1 1001.5 1014 985.5 ... $ note : chr [1:6] "" "" "" "take profit" ...

You may notice that the output is similar to that of a

data.frame or list. That is because J is a list

of atomic vectors, with a class attribute. Essentially,

it is little more than a list of the columns of the

above table.

But note that journal would silently have added

required fields such price, initialised as NA.

str(journal(amount = 1))

'journal': 1 transaction $ instrument: chr NA $ timestamp : num NA $ amount : num 1 $ price : num NA

In the example, the timestamps are of class Date. But

essentially, any vector of mode character or numeric can be used,

for instance POSIXct, or other classes. Here is an example that

uses the \iRpackage{nanotime} package \citep{eddelbuettel2017}.

library("nanotime") journal(amount = 1:3, timestamp = nanotime(Sys.time()) + 1:3)

timestamp amount

1 2020-08-09T08:27:19.812951001+00:00 1

2 2020-08-09T08:27:19.812951002+00:00 2

3 2020-08-09T08:27:19.812951003+00:00 3

3 transactions

Journals can be combined with c.

J2 <- J J2$remark <- rep("new", length(J)) c(J, J2)

instrument timestamp amount price account note remark

1 AMZN 2017-08-01 10 1001.0 Pension <NA>

2 MSFT 2017-08-01 220 73.1 Pension <NA>

3 AMZN 2017-07-14 10 1001.5 Trading <NA>

4 AMZN 2017-07-31 -5 1014.0 Trading take profit <NA>

5 AMZN 2017-08-15 10 985.5 Trading <NA>

6 MSFT 2017-10-05 70 74.4 Pension <NA>

7 AMZN 2017-08-01 10 1001.0 Pension new

8 MSFT 2017-08-01 220 73.1 Pension new

9 AMZN 2017-07-14 10 1001.5 Trading new

10 AMZN 2017-07-31 -5 1014.0 Trading take profit new

11 AMZN 2017-08-15 10 985.5 Trading new

12 MSFT 2017-10-05 70 74.4 Pension new

12 transactions

The new combined journal will not be sorted by date. In

general, a journal need not be sorted in any particular

way. There is a sort method for journals, whose

default is to sort by timestamp.

We can also sort by other fields, for instance by amount.

sort(c(J, J2), by = c("amount", "price"), decreasing = FALSE)

instrument timestamp amount price account note remark

1 AMZN 2017-07-31 -5 1014.0 Trading take profit <NA>

2 AMZN 2017-07-31 -5 1014.0 Trading take profit new

3 AMZN 2017-08-15 10 985.5 Trading <NA>

4 AMZN 2017-08-15 10 985.5 Trading new

5 AMZN 2017-08-01 10 1001.0 Pension <NA>

6 AMZN 2017-08-01 10 1001.0 Pension new

7 AMZN 2017-07-14 10 1001.5 Trading <NA>

8 AMZN 2017-07-14 10 1001.5 Trading new

9 MSFT 2017-10-05 70 74.4 Pension <NA>

10 MSFT 2017-10-05 70 74.4 Pension new

11 MSFT 2017-08-01 220 73.1 Pension <NA>

12 MSFT 2017-08-01 220 73.1 Pension new

12 transactions

You can query the number of transactions in a journal

with length.

2.4. Selecting transactions

In an interactive session, you can use subset to

select transactions.

subset(J, amount > 10)

instrument timestamp amount price account comment 1 MSFT 2017-08-01 220 73.1 Pension 2 MSFT 2017-10-05 70 74.4 Pension 2 transactions

With subset, you need not quote the expression that selects

trades and you can directly access a journal's fields. Because of

the way subset evaluates its arguments, it should not be used

within functions. (See the Examples section in ?journal for

what can happen then.)

More generally, to extract or change a field, use its name, either

through the $ operator or double brackets [[...]].5

J$amount

[1] 10 220 10 -5 10 70

You can also replace specific fields.

J$comment[1] <- "some note" J

instrument timestamp amount price account comment 1 AMZN 2017-08-01 10 1001.0 Pension a note 2 MSFT 2017-08-01 220 73.1 Pension 3 AMZN 2017-07-14 10 1001.5 Trading 4 AMZN 2017-07-31 -5 1014.0 Trading take profit 5 AMZN 2017-08-15 10 985.5 Trading 6 MSFT 2017-10-05 70 74.4 Pension 6 transactions

The `[` method works with integers or logicals, returning

the respective transactions.

J[2:3]

instrument timestamp amount price account comment 1 MSFT 2017-08-01 220 73.1 Pension 2 AMZN 2017-07-14 10 1001.5 Trading 2 transactions

J[J$amount < 0]

instrument timestamp amount price account comment 1 AMZN 2017-07-31 -5 1014 Trading take profit 1 transaction

You can also pass a string, which is then interpreted as a regular expression that is matched against all character fields in the journal.

J["Pension"]

instrument timestamp amount price account comment 1 AMZN 2017-08-01 10 1001.0 Pension a note 2 MSFT 2017-08-01 220 73.1 Pension 3 MSFT 2017-10-05 70 74.4 Pension 3 transactions

You can also specify the fields to match the string against.

J["Pension", match.against = "instrument"]

no transactions

By default, case is ignored, but you can set ignore.case to

FALSE. (Also supported are arguments fixed, perl and

useBytes, to be passed to grepl, with default FALSE.)

J["pension", ignore.case = FALSE]

no transactions

Finally, you can invert the selection with invert.

J["Pension", invert = TRUE]

instrument timestamp amount price account comment 1 AMZN 2017-07-14 10 1001.5 Trading 2 AMZN 2017-07-31 -5 1014.0 Trading take profit 3 AMZN 2017-08-15 10 985.5 Trading 3 transactions

2.5. Computing balances

2.5.1. Computing positions from journals

The function position gives the current balance of all

instruments.

position(J)

2017-10-05

AMZN 25

MSFT 290

To get the position at a specific date, use the when argument.

position(J, when = as.Date("2017-08-10"))

2017-08-10

AMZN 15

MSFT 220

If you do not like such a tabular view, consider splitting the journal.

lapply(split(J, J$instrument),

position, when = as.Date("2017-08-10"))

$AMZN

2017-08-10

AMZN 15

$MSFT

2017-08-10

MSFT 220

The split method breaks up a journal according to a `factor`

(here, the instrument field) into a list of journals. This is

often useful in interactive sessions, to have information per

sub-journal printed.

split(J, J$instrument)

$AMZN instrument timestamp amount price account note 1 AMZN 2017-08-01 10 1001.0 Pension 2 AMZN 2017-07-14 10 1001.5 Trading 3 AMZN 2017-07-31 -5 1014.0 Trading take profit 4 AMZN 2017-08-15 10 985.5 Trading 4 transactions $MSFT instrument timestamp amount price account note 1 MSFT 2017-08-01 220 73.1 Pension 2 MSFT 2017-10-05 70 74.4 Pension 2 transactions

To get a time series of positions, you can use specific keywords

for when: all will print the position at all timestamps in

the journal.

position(J, when = "all")

AMZN MSFT

2017-08-01 15 220

2017-07-14 10 0

2017-07-31 5 0

2017-08-15 25 220

2017-10-05 25 290

Keywords first and last give you the first and last

position. (The latter is the default; so if when is

not specified at all, the last position is computed.)

endofday computes the positions at the ends of

calendar days in the journal. endofmonth and endofyear

print the positions at the ends of all calendar

months and years between the first and the last timestamp.

(The function nth\day

in package datetimeutils offers more options.)

We are not limited to the timestamps that exist in the journal.

position(J, when = seq(from = as.Date("2017-07-10"), to = as.Date("2017-07-20"), by = "1 day"))

AMZN MSFT

2017-07-10 0 0

2017-07-11 0 0

2017-07-12 0 0

2017-07-13 0 0

2017-07-14 10 0

2017-07-15 10 0

2017-07-16 10 0

2017-07-17 10 0

2017-07-18 10 0

2017-07-19 10 0

2017-07-20 10 0

By default, position will show you positions of all instruments, even if they are zero.

position(J, when = as.Date("2017-7-15"))

2017-07-15

AMZN 10

MSFT 0

You can suppress such positions with drop.zero.

position(J, when = as.Date("2017-7-15"), drop.zero = TRUE)

2017-07-15

AMZN 10

drop.zero can also be a numeric value, in which case is it interpreted

as an absolute tolerance. This is useful in cases

such as this one:

position(journal(instrument = "USD", timestamp = as.Date("2012-01-05"), amount = c(0.1, 0.1, 0.1, -0.3)), drop.zero = TRUE)

2012-01-05

USD 2.7756e-17

position(journal(instrument = "USD", timestamp = as.Date("2012-01-05"), amount = c(0.1, 0.1, 0.1, -0.3)), drop.zero = 1e-12)

(Note that there is no output.)

As a final example, when accounts are specified, we may also aggregate positions by account.

position(J, use.account = TRUE)

Pension |-- AMZN 10 `-- MSFT 290 Trading `-- AMZN 15

As described above, each instruments gets its `namespace'.

as.data.frame(position(J, use.account = TRUE))

Pension.AMZN Pension.MSFT Trading.AMZN

2017-10-05 10 290 15

2.5.2. Algorithms for computing balances

Below follow two Perl snippets that compute positions from list of trades. (Perl syntax is similar to C syntax; in particular, array indices start at 0. This makes Perl very useful to test algorithms that are later to be coded in C.)

2.5.2.1. when and timestamp sorted

use warnings; use strict; use v5.14; my @when = (0,1,2,7); ## when to compute position my @timestamp = (0,0,0,2); ## timestamps of trades my @amount = (1,1,1,-2); ## traded amounts ## when and timestamp sorted my @pos = (0) x @when; ## same length as @when my $i = 0; my $j = 0; ## /* i loops over when; j loops over amount/timestamp */ for ($i = 0; $i < @when; $i++) { if ($i == 0) { $pos[$i] = 0; } else { $pos[$i] = $pos[$i - 1]; } while ($j < @amount && $timestamp[$j] <= $when[$i]) { $pos[$i] += $amount[$j]; $j += 1; } } say "@pos";

3 3 1 1

2.5.2.2. when and timestamp unsorted

use warnings; use strict; use v5.14; my @when = (0,1,2,7); ## when to compute position my @timestamp = (0,0,0,2); ## timestamps of trades my @amount = (1,1,1,-2); ## traded amounts my @pos = (0) x @when; ## same length as @when my $i = 0; my $j = 0; @pos = (0,0); for ($i = 0; $i < @when; $i++) { for ($j = 0; $j < @timestamp; $j++) { if ($timestamp[$j] <= $when[$i]) { $pos[$i] += $amount[$j]; } } } say "@pos";

3 3 1 1

2.6. Aggregating and transforming journals

Often the data provided by journals needs to be

processed in some way. A straightforward strategy is

to call as.data.frame on the journal and then to use

one of the many functions and methods that can be used

for data-frames, such as aggregate or apply.

Even without coercion to a data-frame: A journal is a

list of atomic vectors and hence already very similar

to a data-frame. As a consequence, many computations can

also be done directly on the journal, in particular

with tapply.

An example:

you have a journal trades and want to compute monthly

turnover (two-way). If there is only one instrument or

all instruments may be added without harm (typically

when they are denominated in the same currency), you

can use this expression:

tapply(trades,

INDEX = format(trades$timestamp, "%Y-%m"),

FUN = function(x) sum(abs(x$amount)))

To break it down by instrument, just add instrument as

a second grouping variable to the INDEX argument.

tapply(trades,

INDEX = list(format(trades$timestamp, "%Y-%m"),

trades$instrument),

FUN = function(x) sum(abs(x$amount)))

A special case is when a journal is to be processed

into a new journal. For this, PMwR defines an

aggregate method for journals:

aggregate.journal(x, by, FUN, ...)

The method splits the journal according to the

grouping argument by, which can be a list (as in

the default method) or an atomic vector.

The argument FUN can either be a function or list. If

a function, it should receive a journal and also

evaluate to a journal. (Note that this is different

from R's aggregate.data.frame, which calls FUN on

all columns, but in turn cannot address specific

columns of the data.frame.) If FUN is a list, its

elements should be named functions. The names should

match fields in the journal.

An example: we have a journal covering two trading days and wish to create a summary journal, which aggregates buys and sells for every day.

J <- org_journal(text = " | instrument | timestamp | amount | price | |------------+----------------+--------+-------| | A | 2013-09-02 Mon | -3 | 102 | | B | 2013-09-02 Mon | -3 | 104 | | B | 2013-09-02 Mon | 3 | 106 | | B | 2013-09-02 Mon | -2 | 104 | | A | 2013-09-03 Tue | -1 | 110 | | A | 2013-09-03 Tue | 1 | 104 | | A | 2013-09-03 Tue | 5 | 108 | | A | 2013-09-03 Tue | 3 | 107 | | B | 2013-09-03 Tue | -4 | 102 | | B | 2013-09-03 Tue | 3 | 106 | ") fun <- function(x) { journal(timestamp = as.Date(x$timestamp[1]), amount = sum(x$amount), price = sum(x$amount*x$price)/sum(x$amount), instrument = x$instrument[1L]) } aggregate(J, by = list(J$instrument, sign(J$amount), as.Date(J$timestamp)), FUN = fun)

The results is a journal, but with at most a single buy

or sell transaction per instrument per day: see the buy

transaction for instrument A on September, 3.

instrument timestamp amount price 1 A 2013-09-02 -3 102.0000 2 B 2013-09-02 -5 104.0000 3 B 2013-09-02 3 106.0000 4 A 2013-09-03 -1 110.0000 5 B 2013-09-03 -4 102.0000 6 A 2013-09-03 9 107.2222 7 B 2013-09-03 3 106.0000 7 transactions

3. Computing profit and loss

In this chapter we will deal with computing profit and loss (P/L) measured in amounts of currency. If you are interested in computing returns, see Section Computing returns.

3.1. Simple cases

3.1.1. Total profit/loss

We buy one unit of an asset at a price of 100 euro and we sell it for 101. We have made a profit of 1 euro.

This simple case is frequent enough that we should make

the required computation simple as well. The PMwR

package provides a function pl, which for this case

may be called as follows.

pl(price = c(100, 101), amount = c(1, -1))

P/L total 1 average buy 100 average sell 101 cum. volume 2 ‘P/L total’ is in units of instrument; ‘volume’ is sum of /absolute/ amounts.

Instead of a vectors price and amount, you could

also have passed a journal to pl.

In principle, profit/loss (P/L) is straightforward to compute. Let \(x\) be a vector of the absolute amounts traded, and let \(p\) be a vector of the prices at which we traded. Then P/L is just the difference between what we received when selling and what we paid when buying.

\begin{equation} \label{eq:pl1} \sum x^{\scriptsize\mathrm{sell}}_i p^{\scriptsize\mathrm{sell}}_i - \sum x^{\scriptsize\mathrm{buy}}_i p^{\scriptsize \mathrm{buy}}_i \end{equation}This can be simplified when we impose the convention that sold amounts are negative.

\begin{eqnarray} \label{eq:pl2} \mathrm{P/L} &=& -\sum_{x<0} x_i p_i - \sum_{x>0} x_i p_i \\ &=& -\sum x_i p_i \end{eqnarray}

The function pl also expects this convention: in the

code example we had \(x = [1, -1]'\).

There are several ways to perform this basic (or fundamental, rather) computation. Here are some, along with some timing results.

amount <- rep(c(-100, 100), 500) price <- rep(100, length(amount)) library("rbenchmark") benchmark( ## variations amount %*% price, sum(amount*price), crossprod(amount, price), t(amount*price) %*% rep(1, length(amount)), ## matrix summing ## settings columns = c("test", "elapsed", "relative"), order = "relative", replications = 50000)

test elapsed relative

1 amount %*% price 0.126 1.000

3 crossprod(amount, price) 0.138 1.095

2 sum(amount * price) 0.172 1.365

4 t(amount * price) %*% rep(1, length(amount)) 0.440 3.492

pl uses the straightforward sum(amount * price)

variant; only when very long vectors are used, it

switches to crossprod.

pl also accepts an argument instrument: if it is

available, pl computes and reports P/L for each

instrument separately. As an example, suppose you

traded shares of two German companies, Adidas and

Commerzbank. We collect the transactions in a journal.

J <- readOrg(text = " | instrument | amount | price | |-------------+--------+-------| | Adidas | 50 | 100 | | Adidas | -50 | 102 | | Commerzbank | 500 | 8 | | Commerzbank | -500 | 7 | ") J <- as.journal(J) J

instrument amount price

1 Adidas 50 100

2 Adidas -50 102

3 Commerzbank 500 8

4 Commerzbank -500 7

4 transactions

We now pass the journal directly to pl.

pl(J)

Adidas P/L total 100 average buy 100 average sell 102 cum. volume 100 Commerzbank P/L total -500 average buy 8 average sell 7 cum. volume 1000 ‘P/L total’ is in units of instrument; ‘volume’ is sum of /absolute/ amounts.

An aside: since the shares are denominated in the same currency (euro), total profit is the same even if we had left out the instruments; however, average buying and selling prices becomes less informative.

Financial instruments differ not only in the currencies in which they are denominated. Many derivatives have multipliers, which you may also specify. Suppose you have traded FGBL (German Bund futures) and FESX (EURO STOXX 50 futures). One point of the FGBL translates into 1000 euros; for the FESX it is 10 euros.

J <- readOrg(text = " | instrument | amount | price | |-------------+--------+--------| | FGBL MAR 16 | 1 | 165.20 | | FGBL MAR 16 | -1 | 165.37 | | FGBL JUN 16 | 1 | 164.12 | | FGBL JUN 16 | -1 | 164.13 | | FESX JUN 16 | 5 | 2910 | | FESX JUN 16 | -5 | 2905 | ") J <- as.journal(J) futures_pl <- pl(J, multiplier = c("^FGBL" = 1000, "^FESX" = 10), multiplier.regexp = TRUE) futures_pl

FESX JUN 16 P/L total -250 average buy 2910 average sell 2905 cum. volume 10 FGBL JUN 16 P/L total 10 average buy 164.12 average sell 164.13 cum. volume 2 FGBL MAR 16 P/L total 170 average buy 165.2 average sell 165.37 cum. volume 2 ‘P/L total’ is in units of instrument; ‘volume’ is sum of /absolute/ amounts.

Note that we used a named vector to pass the

multipliers. Per default, the names of this vector need

to exactly match the instruments' names. Setting

multiplier.regexp to TRUE causes the names of the

multiplier vector to be interpreted as (Perl-style)

regular expressions.

At this point, it may be helpful to describe how we can

access the results of such P/L computations (other than

having them printed to the console, that is). The

function pl always returns a list of lists – one

list for each instrument.

str(futures_pl)

List of 3 $ FESX JUN 16:List of 6 ..$ pl : num -250 ..$ realised : logi NA ..$ unrealised: logi NA ..$ buy : num 2910 ..$ sell : num 2905 ..$ volume : num 10 $ FGBL JUN 16:List of 6 ..$ pl : num 10 ..$ realised : logi NA ..$ unrealised: logi NA ..$ buy : num 164 ..$ sell : num 164 ..$ volume : num 2 $ FGBL MAR 16:List of 6 ..$ pl : num 170 ..$ realised : logi NA ..$ unrealised: logi NA ..$ buy : num 165 ..$ sell : num 165 ..$ volume : num 2 - attr(*, "class")= chr "pl" - attr(*, "along.timestamp")= logi FALSE - attr(*, "instrument")= chr [1:3] "FESX JUN 16" "FGBL JUN 16" "FGBL MAR 16"

Each such list contains numeric vectors: `pl',

'realised', 'unrealised', 'buy', 'sell',

'volume'. There may also be an additional vector,

timestamp, to be described later in Section PL over

time. The vectors

'realised' and 'unrealised' will be NA unless

along.timestamp is not FALSE, also described in Section

PL over time.

Data can be extracted by standard methods.

unlist(futures_pl[["FESX JUN 16"]])

pl realised unrealised buy sell volume -250 NA NA 2910 2905 10

unlist(lapply(futures_pl, `[[`, "volume"))

FESX JUN 16 FGBL JUN 16 FGBL MAR 16

10 2 2

You may prefer sapply(...) instead of

unlist(lapply(...)). Also, extracting the raw P/L

numbers of each instrument is so common that you can say

pl(pl(...)). So you could have written:

pl(pl(J,

multiplier = c("FGBL" = 1000, "FESX" = 10),

multiplier.regexp = TRUE))

FESX JUN 16 FGBL JUN 16 FGBL MAR 16

-250 10 170

It is often more convenient to have the data presented

as a table, which we can create with as.data.frame.

as.data.frame(futures_pl)

pl buy sell volume

FESX JUN 16 -250 2910.00 2905.00 10

FGBL JUN 16 10 164.12 164.13 2

FGBL MAR 16 170 165.20 165.37 2

Or if you like ASCII tables, with toOrg.

toOrg(as.data.frame(futures_pl), row.names = "instrument")

| instrument | pl | buy | sell | volume | |-------------+------+--------+--------+--------| | FESX JUN 16 | -250 | 2910 | 2905 | 10 | | FGBL JUN 16 | 10 | 164.12 | 164.13 | 2 | | FGBL MAR 16 | 170 | 165.2 | 165.37 | 2 |

We can also use pl when there are open positions.

The simplest example is a journal of just one trade.

pl(amount = 1, price = 100)

P/L total NA average buy 100 average sell NaN cum. volume 1 ‘P/L total’ is in units of instrument; ‘volume’ is sum of /absolute/ amounts. ‘sum(amount)’ is not zero: specify ‘vprice’ to compute P/L.

There can be no P/L number since the position is not

closed. But the message that is shown

tells us what to do: we need to specify a price at

which the open position is to be valued. This valuation

price is passed as argument vprice (v as in

valuation).

pl(amount = 1, price = 100, vprice = 105)

P/L total 5 average buy 100 average sell 105 cum. volume 1 'P/L total' is in units of instrument; 'volume' is sum of /absolute/ amounts. average sell includes 'vprice'

Note that average sell takes into account the valuation

price that we specified.6 But cum. volume has remained

1 since only 1 unit was actually traded.

A common task is to compute P/L over a specified period of time such as one trading day. The procedure for such a case requires three ingredients:

- the initial position and its valuation prices,

- the trades during the period,

- the final position and its prices.

Suppose yesterday, at market close, we had the following positions.

yesterday_position <- c("FESX JUN 16" = -20, "FGBL JUN 16" = 10) yesterday_prices <- c("FESX JUN 16" = 2912, "FGBL JUN 16" = 164.23)

Note that, as with the multipliers above, we use named vectors for both the position and the prices: the names indicate the instruments.

Trading just ended, and we have done the following trades.

J

instrument amount price

1 FGBL MAR 16 1 165.20

2 FGBL MAR 16 -1 165.37

3 FGBL JUN 16 1 164.12

4 FGBL JUN 16 -1 164.13

5 FESX JUN 16 5 2910.00

6 FESX JUN 16 -5 2905.00

6 transactions

Now we pass the three ingredients – initial position,

trades during the period, and valuation prices for the

final, open positions – to pl.

pl(J, initial.position = yesterday_position, initial.price = yesterday_prices, vprice = c("FESX JUN 16" = 2902, "FGBL JUN 16" = 164.60), multiplier = c("FGBL" = 1000, "FESX" = 10), multiplier.regexp = TRUE)

FESX JUN 16 P/L total 1750 average buy 2903.6 average sell 2910.6 cum. volume 10 FGBL JUN 16 P/L total 3710 average buy 164.22 average sell 164.56 cum. volume 2 FGBL MAR 16 P/L total 170 average buy 165.2 average sell 165.37 cum. volume 2 ‘P/L total’ is in units of instrument; ‘volume’ is sum of /absolute/ amounts. for FESX JUN 16: average buy includes ‘vprice’ for FGBL JUN 16: average sell includes ‘vprice’

An aside: we could have simulated this computation by

creating one journal of the initial position and

another journal (with reversed amount signs) for the

final position, merging all three journals and then

computing P/L.

3.1.2. P/L over time

In the examples above, we computed total P/L. It is also illuminating to see how P/L evolved over time. Suppose that a stock trader bought one share at 50, one share at 90 and sold two shares at 100. These trades resulted in a profit of 60, or an average return of more than +40% (bought at an average price of 70, and sold at 100).

J <- journal(price = c( 90, 50, 100),

amount = c( 1, 1, -2))

pl(J)

P/L total 60 average buy 70 average sell 100 cum. volume 4 'P/L total' is in units of instrument; 'volume' is sum of /absolute/ amounts.

That may appear like some pretty good trading. Yet suppose that the order of the trades was

buy at 90 => buy at 50 => sell at 100.

You may have noticed that the journal that we created

above already has the trades ordered this way. We may

not know what was traded and when, but there is clearly

some information in the order of the trades and the

drawdown that it implies: namely a mark-to-market loss

of at least 40 before it recovered. For situations like

this, the argument along.timestamp can be used.

pl(J, along.timestamp = TRUE)

timestamp 1 2 3 P/L total 0 -40 60 __ realised 0 0 60 __ unrealised 0 -40 0 average buy 70 average sell 100 cum. volume 1 2 4 'P/L total' is in units of instrument; 'volume' is sum of /absolute/ amounts.

Note that we do not provide an actual timestamp, in

which case the function uses integers 1,

2, …, length(J). With no further arguments,

as here, the function computes the running position and

evaluates it at every trade with the trade's price. This

may not be totally accurate because of bid–ask spreads

or other transaction costs. But it provides more

information than only computing the aggregate P/L for

the trades.

str(pl(J, along.timestamp = TRUE))

List of 1 $ :List of 7 ..$ timestamp : int [1:3] 1 2 3 ..$ pl : num [1:3] 0 -40 60 ..$ realised : num [1:3] 0 0 60 ..$ unrealised: num [1:3] 0 -40 0 ..$ buy : num 70 ..$ sell : num 100 ..$ volume : num [1:3] 1 2 4 - attr(*, "class")= chr "pl" - attr(*, "along.timestamp")= logi TRUE - attr(*, "instrument")= logi NA

As described above, pl object is a list of lists: one

list for each instrument. But now the components pl,

realised and so on actually hold time-series, with

the times given by timestamp.

In the previous section, we used vprice to value a

final open position. We can also use it to value a

position over time. See Profit/Loss for Open Positions,

and this example on quant.stackexchange.com.

3.2. More-complicated cases

Unfortunately, in real life computing P/L is often more complicated:

- One asset-price unit may not translate into one currency unit: there may be multipliers a.k.a. contract factors; there are even instruments with variable multipliers, e.g. Australian government-bond futures.

- Asset positions may map into cashflows in non-obvious ways. The simple case is the delay in actual payment and delivery of an asset, which is often two or three days. The more problematic cases are derivatives with daily adjustments of margins. In such cases, one may need to model (i.e. keep track of) the actual account balances.

- Assets may be denominated in various currencies.

- Currencies themselves may be assets in the portfolio. Depending on how they are traded (cash, forwards, &c.), computing P/L may not be straightforward.

How – or, rather, to what degree – these complications are handled is, as always, up to the user. For a single instrument, computing P/L in units of the instrument is usually meaningful, though perhaps not always intuitive. But adding up the profits and losses of several assets will in general not work because of multipliers or different currencies. A simple and transparent way is then to manipulate the journal before P/L is computed (e.g., multiply notionals by their multipliers).

4. Computing returns

4.1. Simple returns

The function returns computes returns from

prices. The function computes what are called simple or

discrete returns:7 let \(P_t\) be the price at point

in time \(t\), then

For computing profit/loss in currency units, see Section Computing profit and (or) loss.

Typically, we transform a whole series \(P_{t_1}, P_{t_2}, P_{t_3}, \ldots\) into returns \(R_{t_2}, R_{t_3}, \ldots\), which is a one-liner in R:

simple_returns <- function(x) x[-1L]/x[-length(x)] - 1

(You may argue that these are two lines: yet even a one-liner, if used repeatedly, should be written as a function.)

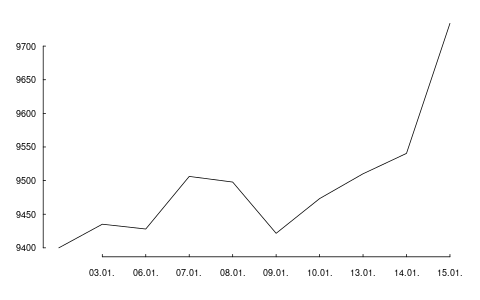

Let us try it. PMwR comes with two small datasets, DAX

and REXP. DAX stands for Deutscher Aktienindex

(German Equity Index), and REXP stands for Rentenindex

(Performance). Both datasets are data-frames of one

column that contains the price for the day, with the

timestamps stored as rownames in format YYYY-MM-DD.

head(DAX)

DAX

2014-01-02 9400.04

2014-01-03 9435.15

2014-01-06 9428.00

2014-01-07 9506.20

2014-01-08 9497.84

2014-01-09 9421.61

We extract the prices for the first five business days of

2014 and put them into a vector P.

P <- head(DAX[[1]], n = 5)

P

[1] 9400.04 9435.15 9428.00 9506.20 9497.84

Now we call simple_returns.

simple_returns(P)

[1] 0.00373509 -0.00075780 0.00829444 -0.00087943

In fact, using returns as provided by PMwR would have given the

same result.

returns(P)

[1] 0.00373509 -0.00075780 0.00829444 -0.00087943

PMwR's returns function offers more convenience than

simple_returns. For instance, it will recognise when

the input argument has several columns, such as a

matrix or a data-frame. In such a case, it computes

returns for each column.8

returns(cbind(P, P))

P P

[1,] 0.003735 0.003735

[2,] -0.000758 -0.000758

[3,] 0.008294 0.008294

[4,] -0.000879 -0.000879

The argument pad determines how the initial

observation is handled. The default, NULL, means that

the first observation is dropped. It is often useful to

use NA instead, since in this way the returns series

keeps the same length as the original price series.

data.frame(price = P, returns = returns(P, pad = NA))

price returns 1 9400.0 NA 2 9435.1 0.00373509 3 9428.0 -0.00075780 4 9506.2 0.00829444 5 9497.8 -0.00087943

Setting pad to 0 can also be useful, because then it is easy to

'rebuild' the original series with cumprod. (But see Section Scaling

series for a description of the function scale1, which is even

more convenient.)

P[1] * cumprod(1 + returns(P, pad = 0))

[1] 9400.04 9435.15 9428.00 9506.20 9497.84

returns also has an argument lag,

with

default 1. This can be used to compute rolling returns,

such as 30-day returns, etc.

returns is a generic function, which goes along with some

overhead. If you need to compute returns on simple data

structures as in the examples above and need fast computation,

then you may also use .returns.

The function is the actual

workhorse that performs the raw return calculation.

Besides having methods for numeric vectors

(which includes those with a dim attribute, i.e. matrices) and

data-frames, returns also understands zoo objects.

So let us create two zoo series, dax and

rex.

library("zoo") dax <- zoo(DAX[[1]], as.Date(row.names(DAX))) rex <- zoo(REXP[[1]], as.Date(row.names(REXP)))

str(dax)

'zoo' series from 2014-01-02 to 2015-12-30 Data: num [1:505] 9400 9435 9428 9506 9498 ... Index: Date[1:505], format: "2014-01-02" "2014-01-03" ...

str(rex)

'zoo' series from 2014-01-02 to 2015-12-30 Data: num [1:502] 441 441 442 442 442 ... Index: Date[1:502], format: "2014-01-02" "2014-01-03" ...

returns(head(dax, 5), pad = NA)

2014-01-02 2014-01-03 2014-01-06 2014-01-07 2014-01-08

NA 0.003735 -0.000758 0.008294 -0.000879

Matrices work as well. We combine both series into a

two-column matrix drax.9

drax <- cbind(dax, rex)

returns(head(drax, 5))

dax rex

2014-01-03 0.003735 0.000611

2014-01-06 -0.000758 0.001704

2014-01-07 0.008294 0.000621

2014-01-08 -0.000879 -0.000131

As you see, just as for a numeric matrix, the function computes the returns for each column.

In fact, zoo objects bring another piece of

information – timestamps – that returns can use.

(Since xts series inherit from zoo, they will work

as well.)

4.2. Holding-period returns

When a timestamp is available, returns can compute returns for

specific calendar periods. As an example, we use the daily

DAX levels in 2014 and 2015 and compute monthly returns from them.

returns(dax, period = "month")

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec YTD

2014 -1.0 4.1 -1.4 0.5 3.5 -1.1 -4.3 0.7 0.0 -1.6 7.0 -1.8 4.3

2015 9.1 6.6 5.0 -4.3 -0.4 -4.1 3.3 -9.3 -5.8 12.3 4.9 -5.6 9.6

If you prefer to not use zoo or xts, you may

also pass the timestamp explicitly to returns.

returns(coredata(dax), t = index(dax), period = "month")

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec YTD

2014 -1.0 4.1 -1.4 0.5 3.5 -1.1 -4.3 0.7 0.0 -1.6 7.0 -1.8 4.3

2015 9.1 6.6 5.0 -4.3 -0.4 -4.1 3.3 -9.3 -5.8 12.3 4.9 -5.6 9.6

Despite the way these monthly returns are printed:

the result of the function call is a numeric vector

(the return numbers), with additional information added

through attributes. There is also a class attribute,

which has value p_returns. The advantage of this data

structure is that it is `natural' to compute with the

returns, e.g. to calculate means, extremes and other

quantities.

range(returns(dax, period = "month"))

[1] -0.0928 0.1232

Most useful, however, is probably the print method,

whose

results you have seen above. You may also compute monthly

returns for matrices,

i.e. for more than one asset. But now the print

method will behave differently. The function's

assumption is that now it would be more convenient to

print the returns aligned by date in a table.

returns(drax, period = "month")

dax rex

2014-01-31 -1.0 1.8

2014-02-28 4.1 0.4

2014-03-31 -1.4 0.1

2014-04-30 0.5 0.3

2014-05-30 3.5 0.9

2014-06-30 -1.1 0.4

2014-07-31 -4.3 0.4

2014-08-29 0.7 1.0

2014-09-30 0.0 -0.1

2014-10-31 -1.6 0.1

2014-11-28 7.0 0.4

2014-12-30 -1.8 1.0

2015-01-30 9.1 0.3

2015-02-27 6.6 0.1

2015-03-31 5.0 0.3

2015-04-30 -4.3 -0.5

2015-05-29 -0.4 -0.2

2015-06-30 -4.1 -0.8

2015-07-31 3.3 0.7

2015-08-31 -9.3 0.0

2015-09-30 -5.8 0.4

2015-10-30 12.3 0.4

2015-11-30 4.9 0.3

2015-12-30 -5.6 -0.6

If you rather wanted the other, one-row-per-year display, just call the function separately for each series.

lapply(list(DAX = dax, REXP = rex),

returns, period = "month")

$DAX

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec YTD

2014 -1.0 4.1 -1.4 0.5 3.5 -1.1 -4.3 0.7 0.0 -1.6 7.0 -1.8 4.3

2015 9.1 6.6 5.0 -4.3 -0.4 -4.1 3.3 -9.3 -5.8 12.3 4.9 -5.6 9.6

$REXP

Jan Feb Mar Apr May Jun Jul Aug Sep Oct Nov Dec YTD

2014 1.8 0.4 0.1 0.3 0.9 0.4 0.4 1.0 -0.1 0.1 0.4 1.0 7.1

2015 0.3 0.1 0.3 -0.5 -0.2 -0.8 0.7 0.0 0.4 0.4 0.3 -0.6 0.5

See ?print.p_returns for more display options. For instance:

print(returns(dax, period = "month"), digits = 2, year.rows = FALSE, plus = TRUE, month.names = 1:12)

2014 2015

1 -1.00 +9.06

2 +4.14 +6.61

3 -1.40 +4.95

4 +0.50 -4.28

5 +3.54 -0.35

6 -1.11 -4.11

7 -4.33 +3.33

8 +0.67 -9.28

9 +0.04 -5.84

10 -1.56 +12.32

11 +7.01 +4.90

12 -1.76 -5.62

YTD +4.31 +9.56

There are methods toLatex and toHTML for monthly

returns. In Sweave documents,

you need to

use results = tex and echo = false in the chunk

options:

\begin{tabular}{rrrrrrrrrrrrrr} <<results=tex,echo=false>>= toLatex(returns(dax, period = "month")) \end{tabular}

(There is also a vignette that gives examples for

toLatex; say vignette("FinTeX", package = "PMwR")

to open it.)

To get annualised returns, use period ann (or actually any

string matched by the regular expression ^ann; case is

ignored).

returns(dax, period = "ann")

6.9% [02 Jan 2014 -- 30 Dec 2015]

Now let us try a shorter period.

returns(window(dax, end = as.Date("2014-1-31")), period = "ann")

-1.0% [02 Jan 2014 -- 31 Jan 2014;

less than one year, not annualised]

The function did not annualise: it refuses to do so

if the time period is shorter than one year. (You may

verify the return for January 2014 in the tables

above.) To force annualising, add a !. The exclamation mark serves as

a mnenomic that it is now imperative to annualise.

returns(window(dax, end = as.Date("2014-1-31")), period = "ann!")

-11.8% [02 Jan 2014 -- 31 Jan 2014;

less than one year, but annualised]

There are several more accepted values for period,

such as year, quarter, month-to-date (mtd), year-to-date

(ytd) or inception-to-date (total). The help page of

returns lists all options.

Note that any

such setting for period requires that the timestamp

can be coerced to Date; for instance, intraday

time-series with POSIXct timestamps would work as

well.

4.3. Portfolio returns

Sometimes we may need to compute returns for a

portfolio of fixed weights, given an assumption when

the portfolio is rebalanced. For instance, we may want

to see how a constant allocation of 10%, 50% and 40%.

to three funds would have done, assuming that a

portfolio is rebalanced once a month.

If more detail is necessary, then function btest can be used;

see Chapter Backtesting. But the simple case can be

done with returns already. Here is an example.

prices <- c(100, 102, 104, 104, 104.5, 2, 2.2, 2.4, 2.3, 2.5, 3.5, 3, 3.1, 3.2, 3.1) dim(prices) <- c(5, 3) prices

[,1] [,2] [,3]

[1,] 100.0 2.0 3.5

[2,] 102.0 2.2 3.0

[3,] 104.0 2.4 3.1

[4,] 104.0 2.3 3.2

[5,] 104.5 2.5 3.1

Now suppose we want a constant weight vector, \([0.1, 0.5, 0.4]'\), but only rebalance at times 1 and 4. (That is, we rebalance the portfolio only with the prices at timestamps 1 and 4.)

returns(prices,

weights = c(10, 50, 40)/100,

rebalance.when = c(1, 4))

[1] -0.0051429 0.0637565 -0.0128240 0.0314590

attr(,"holdings")

[,1] [,2] [,3]

[1,] 0.00100000 0.25000 0.11429

[2,] 0.00100000 0.25000 0.11429

[3,] 0.00100000 0.25000 0.11429

[4,] 0.00096154 0.21739 0.12500

[5,] 0.00096154 0.21739 0.12500

attr(,"contributions")

[,1] [,2] [,3]

[1,] 0.00200000 0.050000 -0.057143

[2,] 0.00201034 0.050258 0.011488

[3,] 0.00000000 -0.023623 0.010799

[4,] 0.00048077 0.043478 -0.012500

The result also contains, as attributes, the imputed holdings and the single period contributions.

Argument weights does not have to be a vector. It can

also be a matrix. In such a case, each row is

interpreted as a portfolio. Instead of weights, we

could also specify fixed positions. See ?returns for

different possibilities to call returns.

4.4. Return contribution

Let \(w(t,i)\) be the weight of portfolio segment \(i\) at the beginning of period \(t\), and let \(r(t,i)\) be the return of segment \(i\) over period \(t\). Then the portfolio return over period \(t\), \(r_{\mbox{\scriptsize P}}(t)\) is a weighted sum of the \(N\) segment returns.

\begin{equation} r_{\mbox{\scriptsize P}}(t) = \sum_{i=1}^{N} r(t,i)w(t,i)\,. \end{equation}When the weights sum to unity, we may also write

\begin{equation} 1 + r_{\mbox{\scriptsize P}}(t) = \sum_{i=1}^{N} \big(1 + r(t,i)\big)w(t,i) \end{equation}or, defining \(1+r \equiv R\),

\begin{equation} R_{\mbox{\scriptsize P}}(t) = \sum_{i=1}^{N} R(t,i)w(t,i)\,. \end{equation}The total return contribution of segment \(i\) over time equals

\begin{equation} \sum_{t=1}^{T-1} \bigg(R(t,i)w(t,i) \prod_{s=t+1}^{T}R_{\mbox{\scriptsize P}}(s) - 1\bigg) + \underbrace{r(T,i)\,w(T,i)}_{\mbox{\scriptsize final period}}\,. \end{equation}In this way, a segment's return contribution in one period is reinvested in the overall portfolio in succeeding periods.

The calculation is provided in the function rc (`return

contribution').

weights <- rbind(c( 0.25, 0.75), ## the assets' weights c( 0.40, 0.60), ## during three periods c( 0.25, 0.75)) R <- rbind(c( 1 , 0), ## the assets' returns c( 2.5, -1.0), ## during these periods c(-2 , 0.5))/100 rc(R, weights, segment = c("equities", "bonds"))

$period_contributions timestamp equities bonds total 1 1 0.0025 0.00000 0.00250 2 2 0.0100 -0.00600 0.00400 3 3 -0.0050 0.00375 -0.00125 $total_contributions equities bonds total 0.00749 -0.00224 0.00525

4.5. Returns when there are external cashflows

External cashflows (or transfers of positions) can be handled just like dividends. The following table shows the values and cashflows of a hypothetical portfolio.

| timestamp | value | cashflow | value with cashflow |

|---|---|---|---|

| 1 | 0 | +100 | 100 |

| 2 | 101 | 0 | 101 |

| 3 | 104 | 0 | 104 |

| 4 | 103 | +100 | 203 |

| 5 | 204 | -200 | 4 |

A total-return series, based on column value with cashflow but excluding column cashflow, can be computed with div\adjust.

cf <- c(100, 100, -200) t <- c(1, 4, 5) x <- c(100, 101, 104, 203, 4) div_adjust(x, t, div = -cf, backward = FALSE)

[1] 100.00 101.00 104.00 103.00 103.51

More conveniently, the function unit_prices helps to compute

so-called time-weighted returns of a portfolio when there are

inflows and outflows.

(The term time-weighted returns is a misnomer, since returns are not weighted at all. They are only time-weighted if time-periods are of equal length.) We repeat the previous example.

NAV <- data.frame(timestamp = 1:5, NAV = x) cf <- data.frame(timestamp = t, cashflow = cf) unit_prices(NAV, cf)

timestamp NAV price units 1 1 100 100.00 1.000000 2 2 101 101.00 1.000000 3 3 104 104.00 1.000000 4 4 203 103.00 1.970874 5 5 4 103.51 0.038645

The function returns a data-frame: to compute returns, use the

price column.

5. Backtesting

This chapter explains how to test trading strategies with the btest function. A recent tutorial is available from SSRN.

5.1. Decisions

At a given instant in time (in actual life, `now'), a trader needs to answer the following questions:

- Do I want to compute a new target portfolio, yes or no? If yes, go ahead and compute the new target portfolio.

- Given the target portfolio and the actual portfolio, do I want to rebalance (i.e. close the gap between the actual portfolio and the target portfolio)? If yes, rebalance.

If such a decision is not just hypothetical, then the answer to the second question may lead to a number of orders sent to a broker. Note that many traders do not think in terms of stock (i.e. balances) as we did here; rather, they think in terms of flow (i.e. orders). Both approaches are equivalent, but the described one makes it easier to handle missed trades and synchronise accounts.

During a backtest, we will simulate the decisions of the trader. How

precisely we simulate depends on the trading strategy. The btest

function is meant as a helper function to simulate these decisions.

The logic for the decisions described above must be coded in the functions

do.signal, signal and do.rebalance.

Implementing btest required a number of decision too:

(i) what to model (i.e. how to simulate the trader), and

(ii) how to code it. As an example for point (i):

how precisely do we want to model the order process (e.g. use

limit orders?, allow partial fills?) Example for (ii): the

backbone of btest is a loop that runs through the data. Loops

are slow in R when compared with compiled languages,10 so should we

vectorise instead? Vectorisation is indeed often possible,

namely if trading is not path-dependent. If we have already a

list of trades, we can efficiently transform them into a

profit-and-loss in R without relying on an explicit loop (see

Section Computing profit and (or) loss). Yet, one advantage of

looping is that the trade logic is more similar to actual

trading; we may even be able to reuse some code in live trading.

Altogether, the aim for btest is to stick to the functional paradigm as much as

possible. Functions receive arguments and evaluate to results; but

they do not change their arguments, nor do they assign or change other

variables `outside' their environment, nor do the results depend on

some variable outside the function. This creates a problem, namely

how to keep track of state. If we know what variables need to be

persistent, we could pass them to the function and always have them returned.

But we would like to be more flexible, so we can pass an

environment; examples are below. To make that clear: functional

programming should not be seen as a yes-or-no decision; it is a

matter of degree. And more of the functional approach can help

already.

5.2. Data structure

All computations of btest will be based on one or

several price series of length T. Internally, these

prices are stored in numeric matrices.

Prices are passed as argument prices. For a single

asset, this must be a matrix of prices with four

columns: open, high, low and close.

For n assets, you need to pass a list of length

four: prices[[1]] must be a matrix with n columns

containing the open prices for the assets;

prices[[2]] is a matrix with the high prices, and so

on. For instance, with two assets, you need four

matrices with two columns each:

open high low close +-+-+ +-+-+ +-+-+ +-+-+ | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | | +-+-+ +-+-+ +-+-+ +-+-+

If only close prices are used, then for a single asset,

use either a matrix of one column or a numeric vector. For

multiple assets a list of length one must be passed,

containing a matrix of close prices. For example,

with 100 close prices of 5 assets, the prices should be

arranged in a matrix p of size 100 times 5; and

prices = list(p).

The btest function runs from b+1 to T. The

variable b is the burn-in and it needs

to be a positive integer. When we take decisions that

are based on past data, we will lose at least one data

point. In rare cases b may be zero.

Here is an important default: at time =t=, we can

use information up to time t-1. Suppose that t

were 4. We may use all information up to

time 3, and trade at the open in

period 4:

t time open high low close 1 HH:MM:SS <--\ 2 HH:MM:SS <-- - use information 3 HH:MM:SS _________________________ <--/ 4 HH:MM:SS X <- trade here 5 HH:MM:SS

We could also trade at the close:

t time open high low close 1 HH:MM:SS <-- \ 2 HH:MM:SS <-- - use information 3 HH:MM:SS _________________________ <-- / 4 HH:MM:SS X <-- trade here 5 HH:MM:SS

No, we cannot trade at the high or low. (Some people like the idea, as a robustness check, to always buy at the high, sell at the low. Robustness checks – forcing a bit of bad luck into the simulation – are a good idea, notably bad executions. High/low ranges can inform such checks, but using these ranges does not go far enough, and is more of a good story than a meaningful test.)

5.3. Function arguments

5.3.1. Available information within functions

btest expects as arguments a number of functions,

such as signal; see the next section for a

complete list. The default is to specify no arguments

to these functions, because they can all access the

following `objects'. These objects actually are, with

the exception of Globals, themselves functions that

can access certain data. These functions can only read;

there are no replacement functions. The exception is

Globals, which is an environment, and which can

explicitly be used for writing (i.e. storing data).

- Open

- open prices

- High

- high prices

- Low

- low prices

- Close

- close prices

- Wealth

- the total wealth (cash plus positions) at a given point in time

- Cash

- cash (in accounting currency)

- Time

- current time (an integer)

- Timestamp

- the

timestampwhen that is specified (i.e. when the argumenttimestampis supplied); if not, it defaults toTime - Portfolio

- the current portfolio

- SuggestedPortfolio

- the currently-suggested portfolio

- Globals

- an environment (not a function)

All functions take as their first argument a lag,

which defaults to 1. So to get the most recent close

price, say

Close()

which is the same as Close(lag = 1).

The lag can be a vector, too: the expression

Close(Time():1)

for instance will return all available close prices. So in period 11, say, you want close prices for lags 10, 9, …, 1. Hence, to receive prices in their correct order, the lag sequence must always be in reverse order.

If you find it awkward to specify the lag in this

reverse order, you may use the argument n instead,

which specifies to retrieve the last n data

points. So the above Close(Time():1) is equivalent to

Close(n = Time())

and saying

Close(n = 10)

will get you the last ten closing prices in their actual temporal order.

5.3.2. Function arguments

signal- The function

signaluses information until and includingt-1and returns the suggested portfolio (a vector) to be held att. This position should be in units of the instruments; if you prefer to work with weights, then you should setconvert.weightstoTRUE. Then, the value returned bysignalwill be interpreted as weights and will be automatically converted to position sizes. do.signaldo.signaluses information until and includingt-1and must returnTRUEorFALSEto indicate whether a signal (i.e. new suggested position) should be computed. This is useful when the signal computation is costly and only be done at specific points in time. If the function is not specified, it defaults tofunction() TRUE. Instead of a function, this may also be- a vector of integers, which then indicate the points in time when to compute a position; or

- a vector of logical values, which then indicate the points in time when to compute a position; or

- a vector that inherits from the class of

timestamp(e.g.Date); or - one of the keywords

firstofmonth,lastofmonth,firstofuqarterorlastofmonth. In this case,timestampmust inherit fromDateor be coercible toDate. (Options can easily be specified with function nth\day in package datetimeutils.)

do.rebalance- just like

do.signal, but refers to the actual trading. If the function is not specified, it defaults tofunction() TRUE. Note that rebalancing can typically not take place at a higher frequency than implied bysignal. That is because callingsignalleads to a position, and when this position does not change (i.e.signalwas not called), there is actually no need to rebalance. Sodo.rebalanceis normally used when rebalancing should be done less often that signal computation, e.g. when the decision whether to trade or not is conditional on something.

print.info- The function is called at the end of an iteration. Whatever it returns will be ignored since it is called for its side effect: print information to the screen, into a file or into some other connection.

cashflow- The function is called at the end of

each iteration; its value is added to

the

cash. The function provides a clean way to, for instance, add accrued interest to or subtract fees from a strategy.

5.4. Examples: A single asset

It is best to describe the btest function through

a number of simple examples.

5.4.1. A useless first example

I really like simple examples. Suppose we have a single instrument, and we use only close prices. The trading rule is to buy, and then to hold forever. All we need is the time series of the prices and the signal function. As an instrument we use the EURO STOXX 50 future with expiry September 2015.

timestamp <- structure(c(16679L, 16680L, 16681L, 16682L, 16685L, 16686L, 16687L, 16688L, 16689L, 16692L, 16693L), class = "Date") prices <- c(3182, 3205, 3272, 3185, 3201, 3236, 3272, 3224, 3194, 3188, 3213) data.frame(timestamp, prices)

timestamp prices

1 2015-09-01 3182

2 2015-09-02 3205

3 2015-09-03 3272

4 2015-09-04 3185

5 2015-09-07 3201

6 2015-09-08 3236

7 2015-09-09 3272

8 2015-09-10 3224

9 2015-09-11 3194

10 2015-09-14 3188

11 2015-09-15 3213

The signal function is very simple indeed.

signal <- function() 1

signal must be written so that it returns the suggested

position in units of the asset. In this first example, the suggested

position always is 1 unit. It is only a suggested portfolio

because we can specify rules whether or not to trade. Examples follow

below.

To test this strategy, we call btest. The initial cash is

zero per default, so initial wealth is also zero in this case. We can

change it through the argument initial.cash.

(solution <- btest(prices = prices, signal = signal))

initial wealth 0 => final wealth 8

The function returns a list with a number of components, but they are not printed. Instead, a simple print method displays some information about the results. In this case, it tells us that the total equity of the strategy increased from 0 to 8.

We arrange more details into a data.frame. suggest is the

suggested position; position is the actual position.

trade_details <- function(solution, prices) data.frame(price = prices, suggest = solution$suggested.position, position = unname(solution$position), wealth = solution$wealth, cash = solution$cash) trade_details(unclass(solution), prices)

price suggest position wealth cash 1 3182 0 0 0 0 2 3205 1 1 0 -3205 3 3272 1 1 67 -3205 4 3185 1 1 -20 -3205 5 3201 1 1 -4 -3205 6 3236 1 1 31 -3205 7 3272 1 1 67 -3205 8 3224 1 1 19 -3205 9 3194 1 1 -11 -3205 10 3188 1 1 -17 -3205 11 3213 1 1 8 -3205

We bought in the second period because the default

setting for the burnin b is 1. Thus, we lose one

observation. In this particular case here, we do not

rely in any way on the past; hence, we set b to

zero. With this setting, we buy at the first price and

hold until the end of the data.

solution <- btest(prices = prices, signal = signal,

b = 0)

trade_details(solution, prices)

price suggest position wealth cash 1 3182 1 1 0 -3182 2 3205 1 1 23 -3182 3 3272 1 1 90 -3182 4 3185 1 1 3 -3182 5 3201 1 1 19 -3182 6 3236 1 1 54 -3182 7 3272 1 1 90 -3182 8 3224 1 1 42 -3182 9 3194 1 1 12 -3182 10 3188 1 1 6 -3182 11 3213 1 1 31 -3182

If you prefer the trades only, i.e. not the position

series, the solution also contains a journal. (See

Keeping track of transactions: journals for more on

journals.)

journal(solution)

instrument timestamp amount price 1 asset 1 1 1 3182 1 transaction

To make the journal more informative, we can pass timestamp and

instrument information when we call btest.

journal(btest(prices = prices, signal = signal, b = 0,

timestamp = timestamp, ## defined above,

## together with prices

instrument = "FESX SEP 2015"))

instrument timestamp amount price

1 FESX SEP 2015 2015-09-01 1 3182

1 transaction

Before we go to the next examples, a final remark, on

data frequency. I have used daily data here, but any

other frequency, also intraday data, is fine. btest

will not care of what frequency your data are or

whether your data are regularly spaced; it will only

loop over the observations that it is given. It is your

own responsibility to write signal (and other

functions) in such a way that they encode a meaningful

trade logic.

5.4.2. More-useful examples

Now we make our strategy slightly more selective. The

trading rule is to have a position of 1 unit of the

asset whenever the last observed price is below 3200

and to have no position when it the price is above 3200.

The signal function could look like this.

signal <- function() { if (Close() < 3200) 1 else 0 }

If you like to write clever code, you may as well have written this:

signal <- function() Close() < 3200

The logical value of the comparison Close() < 3200 would

be converted to either 0 or 1. But the more verbose version

above is clearer.11

We call btest and check the results.

solution <- btest(prices = prices, signal = signal)

trade_details(solution, prices)

price suggest position wealth cash 1 3182 0 0 0 0 2 3205 1 1 0 -3205 3 3272 0 0 67 67 4 3185 0 0 67 67 5 3201 1 1 67 -3134 6 3236 0 0 102 102 7 3272 0 0 102 102 8 3224 0 0 102 102 9 3194 0 0 102 102 10 3188 1 1 102 -3086 11 3213 1 1 127 -3086

(Yes, this strategy works better than the simple buy-and-hold, but I hope you agree that this is only because of luck.)

The argument initial.position specifies the initial

position; default is no position. Suppose we had already

held one unit of the asset.

solution <- btest(prices = prices, signal = signal,

initial.position = 1)

Then the results would have looked as follows.

trade_details(solution, prices)

price suggest position wealth cash 1 3182 1 1 3182 0 2 3205 1 1 3205 0 3 3272 0 0 3272 3272 4 3185 0 0 3272 3272 5 3201 1 1 3272 71 6 3236 0 0 3307 3307 7 3272 0 0 3307 3307 8 3224 0 0 3307 3307 9 3194 0 0 3307 3307 10 3188 1 1 3307 119 11 3213 1 1 3332 119

In the example above, we use the close price, but we do not

access the data directly. A function Close is defined by

btest and passed as an argument to signal. Note that we

do not add it as a formal argument to signal since this is

done automatically. In fact, doing it manually would trigger

an error message:

signal <- function(Close = NULL) ## ERROR: argument name 1 ## 'Close' not allowed

Error in btest(prices = prices, signal = signal) : 'Close' cannot be used as an argument name for 'signal'

Similarly, we have functions Open, High and Low; see

Section 5.3 above for all functions.

Suppose we wanted to add a variable: a threshold that

tells us when to buy. This would need to be an argument to

signal; it would also need to be passed with the

... argument of btest.

signal <- function(threshold) { if (Close() < threshold) 1 else 0 } solution <- btest(prices = prices, signal = signal, threshold = 3190) trade_details(solution, prices)

price suggest position wealth cash 1 3182 0 0 0 0 2 3205 1 1 0 -3205 3 3272 0 0 67 67 4 3185 0 0 67 67 5 3201 1 1 67 -3134 6 3236 0 0 102 102 7 3272 0 0 102 102 8 3224 0 0 102 102 9 3194 0 0 102 102 10 3188 0 0 102 102 11 3213 1 1 102 -3111

So far we have treated Close as a function without arguments,

but actually it has an argument lag that defaults to

1. Suppose the rule were to buy if the last close is below the

second-to-last close. signal could look like this.

signal <- function() { if (Close(1L) < Close(2L)) 1 else 0 }

We could also have written (Close() < Close(2L)). In any

case, the rule uses the close prices of yesterday and

of the day before yesterday, so we need to increase b.

trade_details(btest(prices = prices, signal = signal, b = 2),

prices)

price suggest position wealth cash 1 3182 0 NA NA 0 2 3205 0 0 0 0 3 3272 0 0 0 0 4 3185 0 0 0 0 5 3201 1 1 0 -3201 6 3236 0 0 35 35 7 3272 0 0 35 35 8 3224 0 0 35 35 9 3194 1 1 35 -3159 10 3188 1 1 29 -3159 11 3213 1 1 54 -3159

If we want to trade a different size, we have signal

return the desired value.

signal <- function() if (Close() < 3200) 2 else 0 trade_details(btest(prices = prices, signal = signal), prices)

price suggest position wealth cash 1 3182 0 0 0 0 2 3205 2 2 0 -6410 3 3272 0 0 134 134 4 3185 0 0 134 134 5 3201 2 2 134 -6268 6 3236 0 0 204 204 7 3272 0 0 204 204 8 3224 0 0 204 204 9 3194 0 0 204 204 10 3188 2 2 204 -6172 11 3213 2 2 254 -6172

A often-used way to specify a trading strategy is to

map past prices into +1, 0 or -1 for long, flat

or short. A signal is often only given at a specified

point (like in `buy one unit now'). Example: suppose

the third day is a Thursday, and our rule says `buy

after Thursday'.

signal <- function() if (Time() == 3L) 1 else 0 trade_details(btest(prices = prices, signal = signal), prices)

price suggest position wealth cash 1 3182 0 0 0 0 2 3205 0 0 0 0 3 3272 0 0 0 0 4 3185 1 1 0 -3185 5 3201 0 0 16 16 6 3236 0 0 16 16 7 3272 0 0 16 16 8 3224 0 0 16 16 9 3194 0 0 16 16 10 3188 0 0 16 16 11 3213 0 0 16 16

But this is not what we wanted. If the rule is to buy and then keep the long position, we should have written it like this.

signal <- function() if (Time() == 3L) 1 else Portfolio()

The function Portfolio evaluates to last period's

portfolio. Like Close, its first argument sets the time

lag, which defaults to 1.

trade_details(btest(prices = prices, signal = signal), prices)

prices sp asset.1 wealth cash 1 3182 0 0 0 0 2 3205 0 0 0 0 3 3272 0 0 0 0 4 3185 1 1 0 -3185 5 3201 1 1 16 -3185 6 3236 1 1 51 -3185 7 3272 1 1 87 -3185 8 3224 1 1 39 -3185 9 3194 1 1 9 -3185 10 3188 1 1 3 -3185 11 3213 1 1 28 -3185

We may also prefer to specify signal so that it evaluates

to a weight; for instance, after a portfolio

optimisation. In such a case, you need to set

convert.weights to TRUE. (Make sure to have a meaningful

initial wealth: 5 percent of nothing is nothing.)