Portfolio selection with higher moments

This note was motivated by a question on Stack Exchange. We will look at selecting a portfolio based on mean, variance and skewnewss of its returns: the aim is to find a portfolio that minimises

\[ -\mathrm{mean}(x) + \gamma_1 \mathrm{variance}(x) - \gamma_2 \mathrm{skewness}(x) \]

in which x are the portfolio weights, and mean() and so on are functions that return the moments of the portfolio return. Note that typical investors like mean and skewness, and they dislike variance. But since we minimise, we invert the signs of those functions.

Suppose we are given a set of return scenarios, collected in a matrix R. Each column holds the returns of a particular asset; each row holds the returns of all assets in one scenario. Mean and variance of the assets' return we could easily get from R via the column means m and the variance-covariance matrix S. To arrive at the portfolio mean and variance, we then multiply those summary statistics with the weights (i.e. m'x and x'Sx). It turns out that we can do something similar with skewness and kurtosis; a concise summary of the formulas can for instance be found in the first section of Portfolio Decisions with Higher Order Moments. The trouble with that approach is that it is cumbersome and scales badly, since the necessary arrays get very large.

So we'll instead handle the model with scenario optimisation (see Gilli et al., 2019, chapter 14, for more details). For scenarios, we use a set of industry-portfolio returns: time-series of industry portfolios from Kenneth French's website at https://mba.tuck.dartmouth.edu/pages/faculty/ken.french/ The dataset comprises 30 series of monthly data, and we use a subset that starts in January 2010. (But note that practically, using historical data as scenarios may not be advisable; see e.g. Gilli et al., 2011.)

library("NMOF") library("PMwR") library("zoo") P <- French(dest.dir = tempdir(), "30_Industry_Portfolios_CSV.zip", price.series = TRUE, na.rm = TRUE, frequency = "monthly") P <- zoo(P, as.Date(row.names(P))) P <- window(P, start = as.Date("2010-1-1")) R <- returns(P) str(P) str(R)

‘zoo’ series from 2010-01-31 to 2020-09-30 Data: num [1:129, 1:30] 5095 5243 5476 5396 5106 ... - attr(*, "dimnames")=List of 2 ..$ : chr [1:129] "2010-01-31" "2010-02-28" "2010-03-31" "2010-04-30" ... ..$ : chr [1:30] "Food" "Beer" "Smoke" "Games" ... Index: Date[1:129], format: "2010-01-31" "2010-02-28" "2010-03-31" ... ‘zoo’ series from 2010-02-28 to 2020-09-30 Data: num [1:128, 1:30] 0.0292 0.0444 -0.0146 -0.0537 -0.0197 ... - attr(*, "dimnames")=List of 2 ..$ : chr [1:128] "2010-02-28" "2010-03-31" "2010-04-30" "2010-05-31" ... ..$ : chr [1:30] "Food" "Beer" "Smoke" "Games" ... Index: Date[1:128], format: "2010-02-28" "2010-03-31" "2010-04-30" ...

Let us start with a simpler model, as a benchmark:

minimising portfolio variance. A few constraints: full

investment, no short-sales, maximum position weight is

20%. We'll solve the model with function minvar in

the NMOF package.

Sigma <- cov(R) x.mv <- minvar(Sigma, wmax = 0.2) attr(x.mv, "variance")

[1] 0.0009141

The variance is computed from monthly returns; let us calculate a corresponding annual volatility.

sqrt(attr(x.mv, "variance")) * sqrt(12)

[1] 0.1047

Now, how would we handle this with scenario

optimisation? We have already two ingredients: the

scenario set R and a way to represent a solution (a

numeric vector x). Next, we need an objective

function. It maps a portfolio x into a real number.

The lower this number, the better (recall that we

minimise). The function p.var computes portfolio

variance for a portfolio x, given a scenario set R.

p.var <- function(x, R, ...) { Rx <- c(R %*% x) var(Rx) }

Let us try the function. No surprise: with the minimum-variance portfolio that we computed before, we get the same numeric result.

p.var(x.mv, R)

[1] 0.0009141

Note that we did not compute x'Sx. Instead the function first computed Rx, i.e. the portfolio returns under the scenarios. The portfolio returns Rx are a univariate sample, so it is easy to evaluate any objective function on them.

The final ingredient is a method that evolves one or

perhaps several initial solutions into the optimal –

or at least a very good – one. For this note, we'll

use a method called Threshold Accepting (TA). In fact,

for this particular model, we could use a simpler

method. But TA's advantage is its robustness when it

comes to possible alternative objective functions. TA's

only requirement is that the chosen objective function

can be evaluated for a given portfolio x; it has no

requirements concerning the shape or other properties

of the objective function. The method is described in

much detail in Gilli et al. (2019). We use the

implementation in function TAopt in the NMOF

package.

library("neighbours") ## https://github.com/enricoschumann/neighbours nb <- neighbourfun(type = "numeric", max = 0.2, stepsize = 0.2/100) algo.settings <- list(nI = 20000, ## number of iterations x0 = rep(1/ncol(R), ncol(R)), ## initial solution neighbour = nb) sol <- TAopt(p.var, algo = algo.settings, R = R) sol$xbest ## the best portfolio found by TA sol$OFvalue ## the objective function of this portfolio

[1] 0.0009141

We get the same result as with function minvar. Now

we can move to the actual problem. All we need is a

new objective function. Since R (the language, not the

matrix) does not have a built-in skewness function, we

use the one provided by package e1071.

mom3 <- function(x, R, gamma) { Rx <- c(R %*% x) -mean(Rx) + gamma[1]*var(Rx) - gamma[2]*e1071::skewness(Rx) }

We run the algorithm, for some value of \(\gamma\).

sol.mom3 <- TAopt(mom3,

algo = algo.settings,

R = R,

gamma = c(1, 1))

sol.mom3$OFvalue

[1] -0.3638

For this model, we do not have a benchmark

implementation. So it becomes more difficult to judge

the quality of the solution. In particular, since TA

is a stochastic algorithm, so repeated runs of the

algorithm may result in different solutions. TAopt

simply runs a loop with a fixed number of iterations,

for which I have chosen 20,000. How could I know that

this was an appropriate number? I couldn't. Instead, I

ran a number of small experiments: I restarted the

algorithm a number of times (in the code below, 50

times) with different numbers of iterations. For all

runs, I recorded the objective-function value of the

solution. (See http://enricoschumann.net/R/remarks.htm

for a lengthier discussion.)

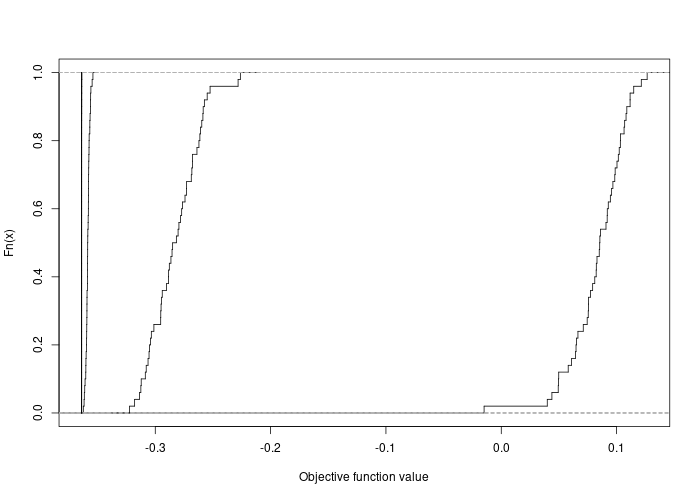

n <- 50 ## number of restarts iterations <- c(2000, 5000, 10000, 20000, 30000, 50000) restarts <- vector("list", length(iterations)) names(restarts) <- as.character(iterations) for (i in iterations) { algo.settings$nI <- i r <- restartOpt(TAopt, n = n, OF = mom3, algo = algo.settings, R = R, gamma = c(1, 1)) restarts[[ as.character(i) ]] <- sapply(r, `[[`, "OFvalue") } for (i in seq_along(iterations)) { if (i == 1) plot(ecdf(restarts[[i]]), xlim = range(restarts), do.points = FALSE, verticals = TRUE, main = "", xlab = "Objective function value") else lines(ecdf(restarts[[i]]), do.points = FALSE, verticals = TRUE) }

The figure shows the empirical distribution functions of the objective-function values for 2000, 5000, … iterations. There is not even a need to label them. With increasing numbers of iterations, the distributions move to the left and become steeper (i.e. solutions become better and less variable). For 20000 iterations and more, one can hardly distinguish betweens the distribution functions any more. In other words, every restart of the algorithm returned, in terms of solution quality, the same solution.

@ARTICLE{,

author = {Manfred Gilli and Enrico Schumann and

Giacomo {di Tollo} and Gerda Cabej},

title = {Constructing 130/30-Portfolios with the {O}mega Ratio},

journal = {Journal of Asset Management},

year = 2011,

volume = 12,

pages = {94--108},

number = 2

doi = {10.1057/jam.2010.25}

}

@BOOK{,

author = {Gilli, Manfred and Maringer, Dietmar and Schumann, Enrico},

title = {Numerical Methods and Optimization in Finance},

publisher = {Elsevier/Academic Press},

year = 2019,

edition = 2,

url = {http://nmof.net},

doi = {10.1016/C2017-0-01621-X}

}